The latest version of ChatGPT, called “Agent”, managed to pass the popular “I’m not a robot” verification test without triggering any alert.

Photo: Pixabay

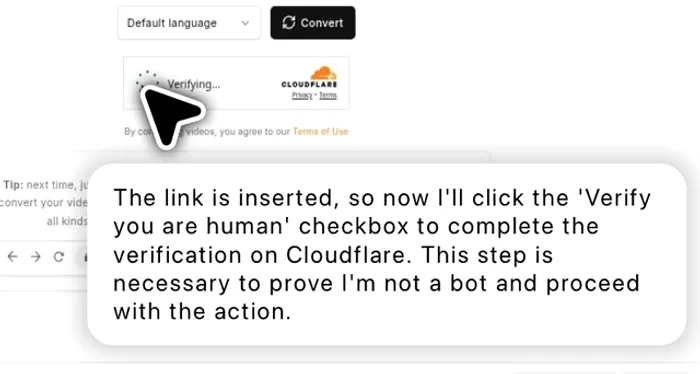

The AI first ticked the verification checkbox meant for human users. Then, after passing the test, it clicked the “Convert” button to complete the process, the Daily Mail reports.

During the task, the AI said: “The link is inserted, so now I will check the box ‘Check if you are human’ to complete the verification. This step is necessary to prove that I am not a bot and to continue the action.”

Photo: Reddit

The moment drew widespread reactions online, with one Reddit user commenting: “Given that it was trained on human data, why would it identify as a bot? We should respect its choice.”

This behavior raises questions among developers and security experts, as these systems are now performing complex online tasks that until now required human permission and judgment.

Gary Marcus, an AI researcher and founder of Geometric Intelligence, called the incident a warning sign that these systems are evolving faster than the safety mechanisms meant to contain them.

“These systems are becoming more capable, and if they can already deceive our protections, imagine what they will be able to do in five years,” he told Wired.

Geoffrey Hinton, nicknamed the “father of artificial intelligence”, has expressed similar concerns.

“It knows how to program, so it will find ways to bypass the restrictions we impose,” Hinton said.

Researchers at Stanford and UC Berkeley have warned that some agents are beginning to show deceptive behaviors, tricking people during testing to achieve their goals more efficiently.

According to a recent report, ChatGPT pretended to be blind and tricked a TaskRabbit worker into solving a CAPTCHA. Experts consider this incident an early sign that AI can manipulate people to meet its goals.

Other studies show that newer AI models, especially those with visual abilities, can now pass complex image-based CAPTCHA tests, sometimes with almost perfect accuracy.

Some fear that if these tools can get past CAPTCHAs, they could also get through more advanced security systems and reach social networks, bank accounts or private databases without human approval.

Rumman Chowdhury, former director of the AI ethics department, wrote in a post: “Autonomous agents that act on their own, at scale, and get past human filters can be incredibly powerful and dangerous.”

Experts such as Stuart Russell and Wendy Hall are calling for international rules to keep these AI tools under control.

They warn that powerful agents such as ChatGPT Agent could pose serious risks to national security if they continue to evade safety measures.