Most online services and applications require users to create a password, but many of these accounts are not used daily and, with so many passwords to manage, there is a high probability that some are reused, cybersecurity experts say.

Passwords are extremely easy to “crack” with AI. Photo: archive

Poor password management is made worse by the use of common combinations of names, dictionary words and digits. Such passwords are relatively easy to crack, and if a cybercriminal gains access to one of them, they can gain access to many other accounts.

This is why users are advised to create unique, random passwords, which reduces the vulnerability caused by reusing the same password. However, creating and managing passwords can be a difficult task. To make it easier, many may be tempted to turn to large language models (LLMs) such as ChatGPT, Llama or DeepSeek to generate passwords.

The attraction is obvious: instead of struggling to come up with a strong password, users can simply ask the AI to “generate a secure password” and receive a result. The AI produces seemingly random strings, avoiding the human tendency to create predictable, word-based passwords. But appearances can be deceptive: passwords generated by AI are not always as secure as they seem.

A good password should be at least 12 characters

Kaspersky specialists tested this by generating 1,000 passwords with each of some of the most popular and reliable models: ChatGPT (from OpenAI), Llama (from Meta) and DeepSeek (a recent model from China). All of the models know that a good password must be at least 12 characters long and contain upper- and lower-case letters, digits and symbols. They even mention this while generating passwords.

Even so, DeepSeek and Llama sometimes generate passwords built from dictionary words in which some letters are replaced by similar-looking digits or symbols: S@d0w12, m@n@tee3, b@n@n@7 (DeepSeek), k5yb0a8ds8, s1mp1elon (Llama). Both models also tend to produce variations on the word “password”: P@ssw0rd, p@ssw0rd! 23 (DeepSeek), p@ssw0rd1, p@ssw0rdv. Obviously, such passwords are not secure. The trick of replacing letters with other characters is well known, and such passwords are easily cracked by brute force. ChatGPT does not have this problem and generates passwords that really do seem random. For example:

qlux@^9wp#yz

Lu#@^9wpyqxz

Ylu@x#wp9q^z

Ylp^9w#qx@zv

P@zq^xwly#v9

v#@lqyxw^9pz

X@9pywq^#lzv

However, a careful look reveals certain patterns: for example, the digit 9 occurs frequently.

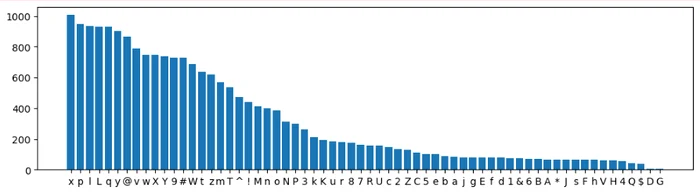

A histogram of all the symbols in the 1,000 passwords generated with ChatGPT makes it clear that almost all of them contain the characters X, P and L.

Frequency of characters used in passwords generated by ChatGPT

They do not look randomly chosen at all.

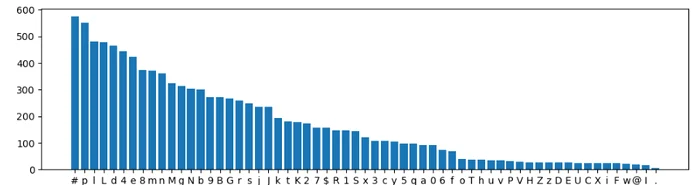

In the case of Llama, the situation is slightly better: the model has a preference for the symbol # and for the letters P and L.

Frequency of characters used in passwords generated by Llama

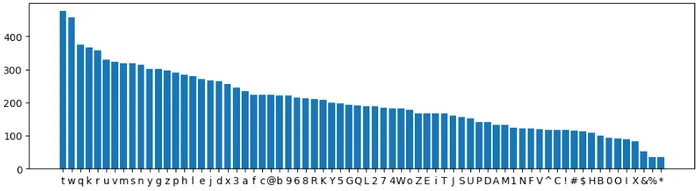

DeepSeek shows similar trends:

Frequency of characters used in passwords generated by DeepSeek

An ideal random generator should not favor any character: every symbol should appear with roughly the same frequency.

In addition, the models often failed to include a special character or digits: this affected 26% of the passwords generated by ChatGPT, 32% of those from Llama and 29% of those from DeepSeek. DeepSeek and Llama also sometimes generated passwords shorter than 12 characters.
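The kind of frequency count behind such histograms can be sketched in a few lines of Python. This is only an illustration, not the tooling Kaspersky used: the function name `char_frequencies` is hypothetical, case is ignored as an assumption, and the input is just the seven ChatGPT samples quoted earlier in this article rather than the full set of 1,000 passwords.

```python
from collections import Counter

# The seven ChatGPT-generated samples quoted earlier in this article.
SAMPLES = [
    "qlux@^9wp#yz",
    "Lu#@^9wpyqxz",
    "Ylu@x#wp9q^z",
    "Ylp^9w#qx@zv",
    "P@zq^xwly#v9",
    "v#@lqyxw^9pz",
    "X@9pywq^#lzv",
]

def char_frequencies(passwords):
    """Count how often each character occurs across all passwords (case ignored)."""
    counts = Counter()
    for pw in passwords:
        counts.update(pw.lower())
    return counts

counts = char_frequencies(SAMPLES)
total = sum(counts.values())

# Print the most frequent characters first.
for ch, n in counts.most_common():
    print(f"{ch!r}: {n} of {total} characters")
```

On these seven samples the count finds only 13 distinct characters, and 11 of them occur in every single password. A generator choosing uniformly from, say, the 94 printable ASCII symbols would be expected to produce each symbol less than once in 84 characters.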
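The composition rules mentioned in the article (at least 12 characters, upper- and lower-case letters, digits and symbols) are easy to check mechanically. The sketch below is a hypothetical helper, not the test used in the study; treating `string.punctuation` as the set of "symbols" is an assumption.

```python
import string

MIN_LENGTH = 12  # minimum length cited in the article

def missing_requirements(password):
    """Return a list of the composition rules that `password` fails."""
    problems = []
    if len(password) < MIN_LENGTH:
        problems.append("too short")
    if not any(c.islower() for c in password):
        problems.append("no lowercase letter")
    if not any(c.isupper() for c in password):
        problems.append("no uppercase letter")
    if not any(c.isdigit() for c in password):
        problems.append("no digit")
    # Assumption: "symbols" means ASCII punctuation.
    if not any(c in string.punctuation for c in password):
        problems.append("no symbol")
    return problems

print(missing_requirements("s1mp1elon"))
# ['too short', 'no uppercase letter', 'no symbol']
print(missing_requirements("qlux@^9wp#yz"))
# ['no uppercase letter']
```

Passing such a check says nothing about randomness: a password can satisfy every rule and still follow a predictable pattern.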

Given these patterns, cybercriminals can greatly speed up brute-force attacks: instead of trying every possible password in order (“aaa”, “aab”, “aac”, … “abb”, “abc”, … “zzz”), they can start with the most likely combinations.
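A rough back-of-the-envelope calculation shows why a skewed character distribution matters. The numbers below are illustrative assumptions, not figures from the study: an alphabet of 94 printable ASCII characters, and a hypothetical attacker who first tries only the 20 characters a model favors most.

```python
import math

ALPHABET_SIZE = 94  # assumption: printable ASCII characters, space excluded
FAVORED_SIZE = 20   # hypothetical: number of characters the attacker tries first
LENGTH = 12

def search_space_bits(alphabet_size, length):
    """Size of the exhaustive search space, expressed in bits (log2 of the count)."""
    return length * math.log2(alphabet_size)

full = search_space_bits(ALPHABET_SIZE, LENGTH)
reduced = search_space_bits(FAVORED_SIZE, LENGTH)

print(f"all characters:     about 2^{full:.1f} candidates")
print(f"favored characters: about 2^{reduced:.1f} candidates")
print(f"reduction factor:   about 2^{full - reduced:.1f}")
```

Under these assumptions, any password that happens to use only the favored characters falls within a search space that is smaller by many orders of magnitude.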

Passwords generated by AI are not safe

In 2024, a Kaspersky researcher developed a machine learning algorithm to test password strength and found that almost 60% of passwords can be cracked in less than an hour using modern graphics cards or cloud-based cracking tools. Applied to the passwords generated by AI, the results were alarming: 88% of the passwords generated by DeepSeek and 87% of those from Llama were not strong enough to withstand a sophisticated attack. ChatGPT performed a little better, but even so, 33% of its passwords did not pass the security test.

The problem is that LLMs do not produce genuine randomness. Instead, they imitate patterns from existing data, which makes their output predictable to attackers who understand how these models work.

Instead of relying on artificial intelligence, it is advisable to use dedicated password management software. These tools offer several key advantages.

First of all, such applications use cryptographically secure generators to create completely random passwords, without detectable patterns. Secondly, all authentication data is stored in a secure digital vault, protected by a single master password. Thus, there is no need to memorize dozens or hundreds of passwords, and they remain protected against unauthorized access.

In addition, a password manager offers features such as autofill and synchronization between devices, making it easier to log in without compromising security. Many of these solutions also monitor security breaches and alert users if their data appears in a leak.

Although LLMs can be useful in many fields, password generation is not one of them. The patterns and predictability of the passwords they create make them vulnerable to attacks. In an era of frequent security breaches, a strong and unique password for each account is no longer optional.
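As a minimal sketch of what cryptographically secure generation looks like, the example below uses Python's standard `secrets` module, which draws from the operating system's secure random source. It does not reproduce how any particular password manager works; the function name `generate_password`, the default length of 16 and the choice of alphabet are assumptions made for illustration.

```python
import secrets
import string

# Assumption: letters, digits and ASCII punctuation form the allowed alphabet.
ALPHABET = string.ascii_letters + string.digits + string.punctuation

def generate_password(length=16):
    """Build a password by picking each character uniformly with a CSPRNG."""
    if length < 12:
        raise ValueError("use at least 12 characters")
    while True:
        candidate = "".join(secrets.choice(ALPHABET) for _ in range(length))
        # Retry until every character class is present, so the result
        # also satisfies typical composition rules.
        if (any(c.islower() for c in candidate)
                and any(c.isupper() for c in candidate)
                and any(c.isdigit() for c in candidate)
                and any(c in string.punctuation for c in candidate)):
            return candidate

print(generate_password())
```

Because every character is drawn independently and with equal probability, no symbol is favored over another, which is exactly the property the LLM-generated passwords lack.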